DEEPaaS API

The DEEPaaS API provides user-friendly interaction with the underlying Deep Learning modules and can be used both for training models and for performing inference with services.

For detailed, up-to-date documentation, please refer to the official DEEPaaS documentation.

Integrate your model with the API

Tip

The best approach to integrate your code with DEEPaaS is to create an empty template using the AI4OS Modules Template. Once the template is created, move your code inside your package and define the API methods that will interface with your existing code.

To make your Deep Learning model compatible with the DEEPaaS API you have to:

1. Define the API methods for your model

Create a Python file (named, for example, api.py) inside your package. In this file you can define any of the API methods. You don’t need to define all of them, just the ones you need; every other method will return a NotImplementedError when queried from the API. For example:

- Enable prediction: implement get_predict_args and predict.
- Enable training: implement get_train_args and train.
- Enable model weights preloading: implement warm.
- Enable model info: implement get_metadata.

If you don’t feel like reading the DEEPaaS docs (which you should), here are some examples of files you can quickly draw inspiration from:

- returning a JSON response for predict().
- returning a file (e.g. image, zip, etc.) for predict().
- a more complex example which also includes train() with monitoring.

Tip

Try to keep your module’s code as decoupled as possible from DEEPaaS code, so that any future changes in the API are easy to integrate. This means that the predict() in api.py should mostly be an interface to your true predict function. In pseudocode:

# api.py
import utils  # e.g. this is where your true predict function is

def predict(**kwargs):
    args = preprocess(kwargs)  # transform DEEPaaS input to your standard input
    out = utils.predict(args)  # make prediction
    resp = postprocess(out)    # transform your standard output to DEEPaaS output
    return resp
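The pseudocode above can be turned into a small runnable sketch. Here true_predict stands in for the function that would normally live in your own package (utils.predict in the pseudocode), and the "data" argument, the comma-separated input format and the "mean" output field are all assumptions made purely for illustration.

```python
# api.py: runnable sketch of the decoupling pattern. In a real module,
# true_predict would be imported from your own package (e.g. utils.predict).


def true_predict(numbers):
    """Stand-in for the module's real prediction function."""
    if not numbers:
        raise ValueError("at least one number is required")
    return sum(numbers) / len(numbers)


def preprocess(kwargs):
    """Transform the keyword arguments received by the API into standard input."""
    raw = kwargs.get("data", "")  # hypothetical argument name
    return [float(x) for x in raw.split(",") if x.strip()]


def postprocess(out):
    """Transform the module's output into a JSON-serializable response."""
    return {"mean": out}


def predict(**kwargs):
    """Thin interface: all real work happens in true_predict."""
    args = preprocess(kwargs)
    out = true_predict(args)
    return postprocess(out)
```

With this split, a change in how the API delivers its arguments would only touch preprocess and postprocess, while true_predict stays untouched and can be tested on its own.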

2. Define the entrypoints to your model

You must define the entrypoints pointing to this file in the setup.cfg as follows:

[entry_points]
deepaas.v2.model =
    pkg_name = pkg_name.api

Here is an example of the entrypoint definition in the setup.cfg file.
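After installing your package, one way to check that the entrypoint was registered is to list the entry points in the deepaas.v2.model group with the standard library. This is only a sketch for verification and is not part of DEEPaaS itself; the helper name list_deepaas_models is hypothetical.

```python
from importlib.metadata import entry_points


def list_deepaas_models(group="deepaas.v2.model"):
    """Return {entry point name: target module} for the given group."""
    eps = entry_points()
    if hasattr(eps, "select"):
        # Python 3.10+: entry points can be filtered with select().
        selected = eps.select(group=group)
    else:
        # Python 3.8/3.9: entry_points() returns a dict of group -> entries.
        selected = eps.get(group, [])
    return {ep.name: ep.value for ep in selected}


if __name__ == "__main__":
    # With the setup.cfg shown above, an installed package would be expected
    # to appear here under the name given on the left of "pkg_name = ...".
    print(list_deepaas_models())
```

An empty result usually means the package has not been installed (or reinstalled) since the entrypoint was added to setup.cfg.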

Running the API

To start the API run:

deepaas-run --listen-ip 0.0.0.0

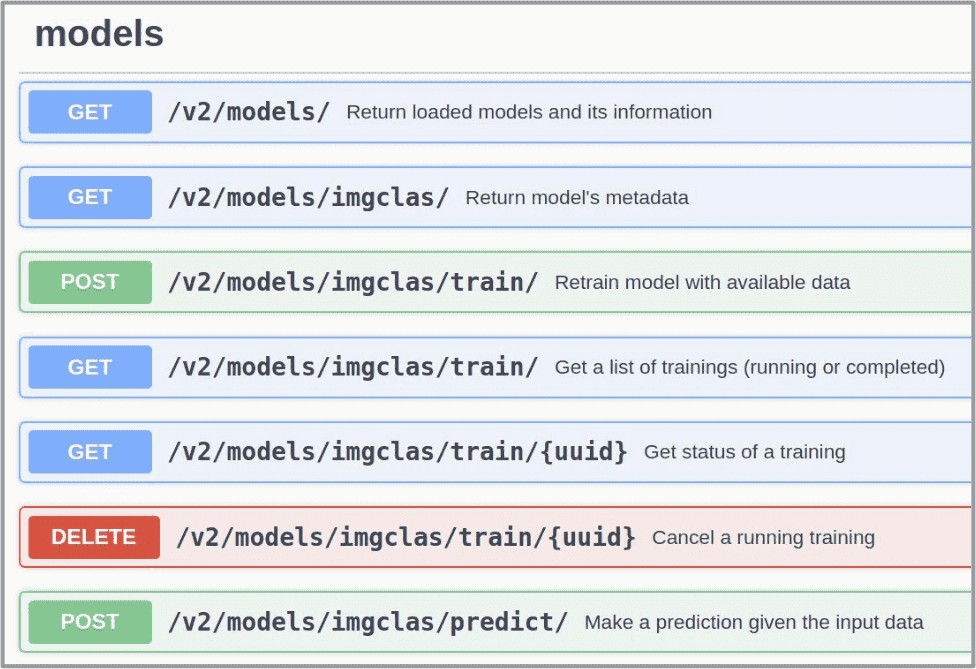

and go to http://0.0.0.0:5000/ui. You will see a nice UI with all the methods:

If running the API from inside a module’s Docker container, you can use the command:

deep-start --deepaas
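Whichever command is used, a quick scripted check that the UI is being served can be handy, for example in a container health check. The sketch below uses only the standard library and the /ui path mentioned above; the helper name ui_is_reachable and the default address 127.0.0.1:5000 are assumptions (adjust them to wherever your API is actually listening).

```python
import urllib.request


def ui_is_reachable(base_url="http://127.0.0.1:5000", timeout=2.0):
    """Return True if GET <base_url>/ui answers with HTTP 200, else False."""
    try:
        with urllib.request.urlopen(base_url + "/ui", timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # Covers refused connections, timeouts and HTTP error statuses
        # (urllib's URLError and HTTPError are subclasses of OSError).
        return False


if __name__ == "__main__":
    print("DEEPaaS UI reachable:", ui_is_reachable())
```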